Have a standardized format for reporting issues. I have found it good practice to write issues in a way that explains the full picture, so that, for example, an executive who is not closely involved with the game or product can fully understand them. This may include a brief explanation of the current design and its intent, followed by the behavior exhibited by participants, and finally the outcome of how participants interacted with the design. It is important to make clear how the intent is misaligned with how users actually perceived or interacted with the design.

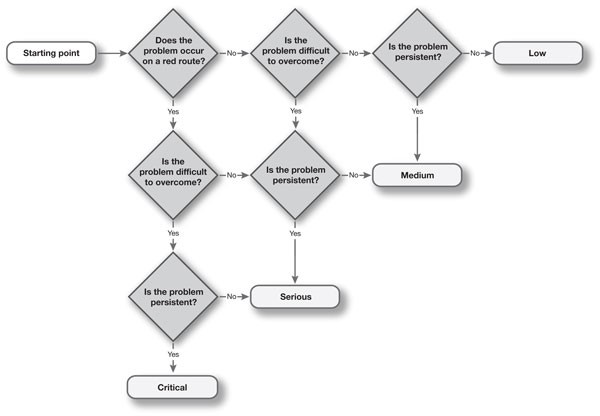

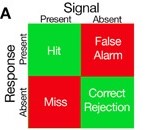

Have a system for addressing and labeling the severity of issues. This allows you and your team to clearly distinguish an issue that needs to be fixed as soon as possible from one that was a minor nuisance. This may be some sort of labeling system, such as A, B, C, D, with A being the most critical and D the lowest priority. Similarly, you may use “Critical, Medium, and Low” or “1, 2, 3” to the same effect. For a visual example, see the picture below, which uses a “Critical, Serious, Medium, Low” severity scale (source: User Focus).
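For teams that track issues in a spreadsheet or tool rather than a slide deck, the standardized format and severity scale described above could be captured in a small data structure. The following is only a minimal sketch under my own assumptions: the `Severity` and `Issue` names, the field names, and the example issues are all hypothetical, and the four levels simply mirror the “Critical, Serious, Medium, Low” scale mentioned above.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    """Hypothetical four-level scale; a lower number means more urgent."""
    CRITICAL = 1
    SERIOUS = 2
    MEDIUM = 3
    LOW = 4


@dataclass
class Issue:
    """One reported issue, following the intent -> behavior -> outcome format."""
    title: str              # names the design problem, not the participant
    design_intent: str      # what the design is meant to do
    observed_behavior: str  # what participants actually did
    outcome: str            # the effect on the participant's experience
    severity: Severity

    def summary(self) -> str:
        # One-line label for skimming, e.g. "[Critical] ..."
        return f"[{self.severity.name.title()}] {self.title}"


def sort_by_severity(issues):
    """Return issues ordered from most to least urgent."""
    return sorted(issues, key=lambda issue: issue.severity)


# Illustrative, made-up issues (not taken from any real study).
issues = [
    Issue(
        title="Map legend icons are hard to tell apart",
        design_intent="Icons should let players identify mission types at a glance.",
        observed_behavior="Participants hovered over several icons before choosing.",
        outcome="Mission selection took longer than intended.",
        severity=Severity.LOW,
    ),
    Issue(
        title="The [Continue] button has low discoverability",
        design_intent="Players should advance from the mission menu in one step.",
        observed_behavior="Several participants searched the menu before finding it.",
        outcome="Participants were delayed and reported frustration.",
        severity=Severity.CRITICAL,
    ),
]

for issue in sort_by_severity(issues):
    print(issue.summary())
```

Using an ordered type for severity means the report can always be sorted with the most urgent issues first, whichever labels (A to D, 1 to 3, or words) your team prefers to display.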

Use consistent language to communicate issues. Using consistent language to communicate issues benefits the development team in terms of knowing what to expect and not needing to re-learn report language every time. It’s also beneficial to the research team, as it helps speed up report delivery because there may be less debate about how best to communicate issues. Some useful additions I’ve found include heuristics (e.g., https://www.nngroup.com/articles/usability-heuristics-applied-video-games/) and AXP & Accessibility pattern language (https://accessible.games/accessible-player-experiences/).

Use mindful terminology for suggestions/recommendations. If the development team(s) you work with are receptive to recommendations or fixes, use terminology like “consider” and “might be beneficial” when communicating issues. This emphasizes that they are the design experts and you, as the researcher, are simply suggesting a solution and not saying “this is how it should be fixed and it’s the only possible way.” Generally, your main objective is to find and identify problems, and this kind of wording reinforces that.

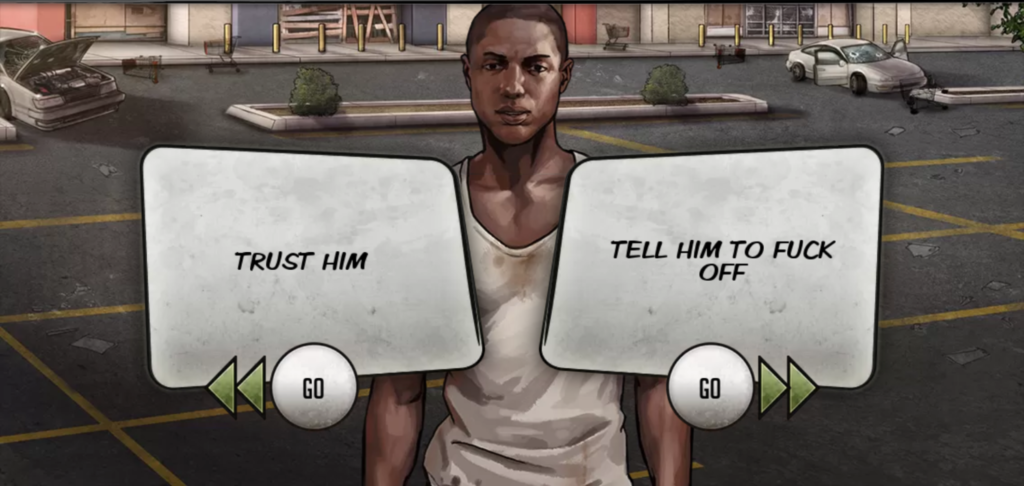

Blame the design, not the participant. I think it’s important to emphasize that user research reports aim to improve the game design, and that should be reflected in your writing. I’ve encountered numerous instances where developers would question whether an issue written in a report was actually an issue or if we simply had an outlier as a participant. With video games being enjoyed by so many people all over the world, one person encountering a usability issue in a lab setting can translate to thousands, maybe even millions, out in the wild. Therefore, try to avoid issue titles such as “participants did not understand,” and instead title it “feature X is not clearly communicated.” Not only does the former title seem to place blame on the participants, as if they did not interact with the game correctly (spoiler: there is no wrong way to play a game), but people quickly reading through the report who only see titles will be left asking “why?” rather than knowing there is an issue with feature X. Additionally, not only is it not glance-able, but “participants did not understand” is the outcome, not the issue.

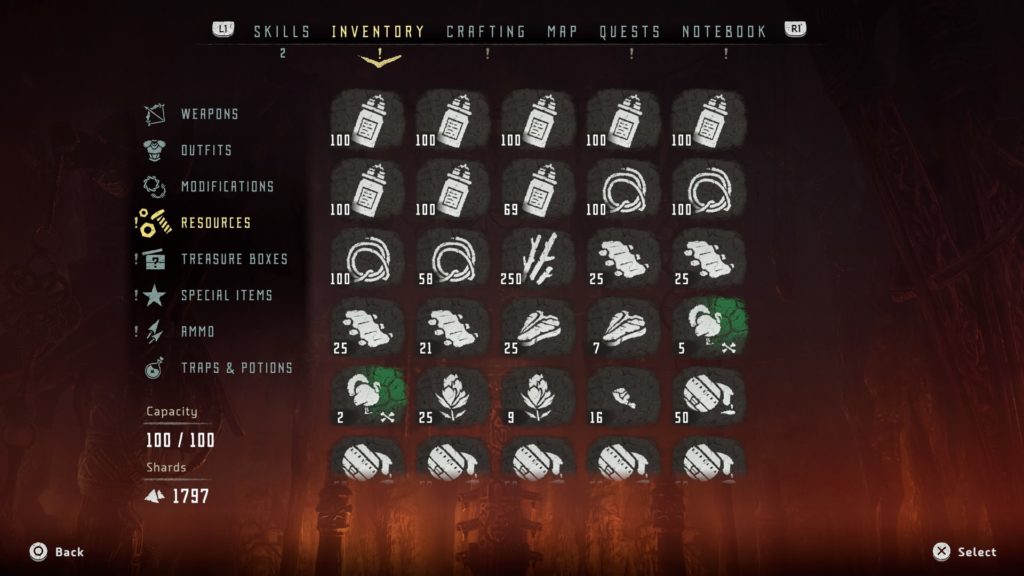

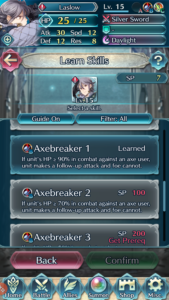

Incorporate further evidence of the issue. In addition to your written explanation, things such as a screenshot, video clip, or gif can paint a clearer picture of what the issue is and help provide more context. Which leads to the next tip…

Humanize your reporting. If your data collection allows for the recording of participants while they interact with your game or product, including some relevant clips can help development teams further visualize actual humans struggling with their design, which can help motivate them to find a solution to fix it.

Provide summaries that address how the experience is affecting the player rather than what features are not working well. Researchers generally have a good idea of the design intent before testing but, depending on the scale of the game, you may have a lot of stakeholders who have differing ideas of what the intended experience should be. Further humanizing your reports by stating how participants’ motivations, behaviors, and perceptions are affected can help developers think more about it as an experience that’s impacting someone rather than a product with a set of features. It’s rarely as simple as “fixing feature X will help players feel more competent during gameplay,” and a more holistic, rather than reductionist, view of the user experience can help get that point across.

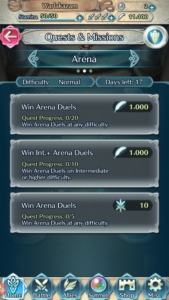

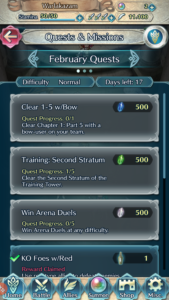

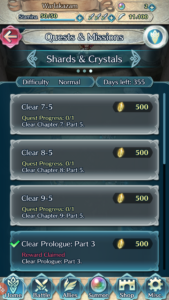

Differentiate between participants in the study and the proverbial player. If you are speaking specifically about something observed during a study, make it clear that you are doing so by referring to them as “participants.” For example, “Several participants were observed struggling to navigate the mission menu.” If you are speaking hypothetically about a specific interaction or experience, use the proverbial player. For example, “Players may have difficulty navigating the mission menu due to the low discoverability of the [Continue] button.”

Implement a system to indicate if an issue has been observed and/or reported during a previous study. This may be an additional text tag, such as “reoccurring”, or some sort of iconography indicating that the issue has been observed before. This not only further validates findings from previous research but might also motivate the development team to take action more quickly if they become tired of seeing participants encounter the same issues. It’s also additional evidence that can be referenced over time to build a case for elevating the severity of an issue.

Incorporate iconography for greater legibility. Using different icons to indicate different severity levels or types of issues written in reports can help your team read through a report more quickly. For example, if you want to make the team aware of something that was not necessarily an issue but is worth noting, you might label it “Note” and/or include a pencil or notepad icon to indicate this. That way, if someone is short on time and needs to skim the report for only the information relevant to them, they can use the icons as reference points.